About Me

AI Engineer based in Nairobi, Kenya. I build and deploy self-hosted LLM systems — fine-tuning, quantization, RAG, and multi-agent pipelines. Currently at Supreme AI building AI-powered products for the Australian market.

I also build AI tools independently — TradingAgents (a 13-agent NSE trading framework) and an LLM-powered financial auditor. MSc Computer Science from DeKUT with 3 IEEE publications. Full stack: Python, LangGraph, FastAPI, React, Next.js.

LLM Systems

Backend

Frontend

ML Foundations

Experience & Education

AI Engineer

Supreme AI — Australia (Remote)

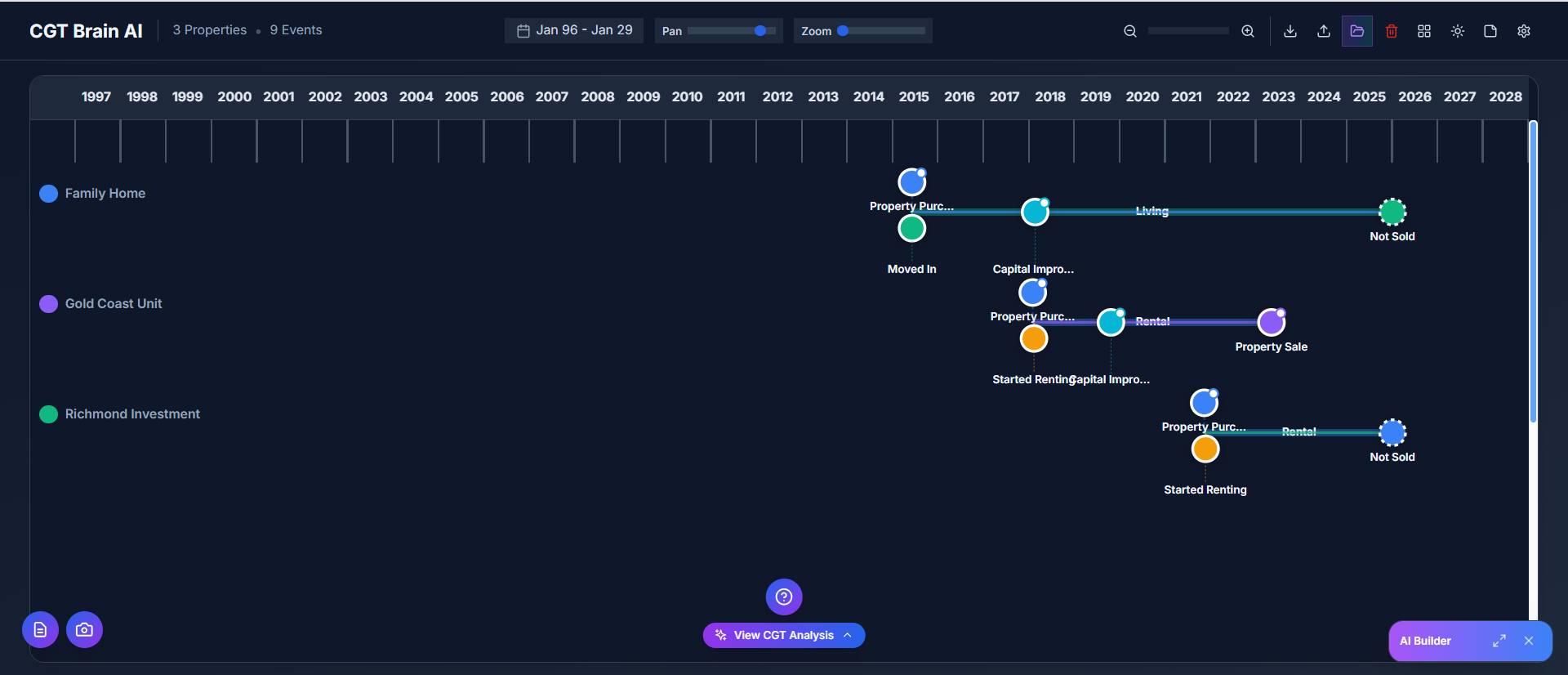

Building AI-powered products for the Australian market including CGT Brain (capital gains tax engine) and an AML/CTF compliance system. Fine-tuning, quantization, and deploying self-hosted LLMs via vLLM.

Independent AI/ML Researcher

Nairobi, Kenya

Implementing global AI research papers for African markets. Built TradingAgents (multi-agent NSE trading system) and an LLM-powered financial auditor. 3 IEEE publications.

Master's Degree, Computer Science

Dedan Kimathi University of Technology (DeKUT)

Research focus: Medical imaging with deep learning, NLP topic modeling, financial health assessment using ML. 3 IEEE publications.

BSc Information Technology

Dedan Kimathi University of Technology (DeKUT)

Second Class Upper Honours.

Projects

CGT Brain

An AI system that performs the Capital Gains Tax analysis normally done by lawyers and accountants. Given a property timeline, it applies ATO rules, runs a full CGT analysis, and generates detailed reports. Work that traditionally takes professionals hours is done in seconds.

Biashara Buddy

An AI-powered business consultant that helps Kenyan entrepreneurs with budgeting, licensing requirements, business ideas, and location selection: the kind of guidance a qualified business advisor provides.

AI Research in a Kenyan Context

Implementing global AI/ML research papers to solve local problems.

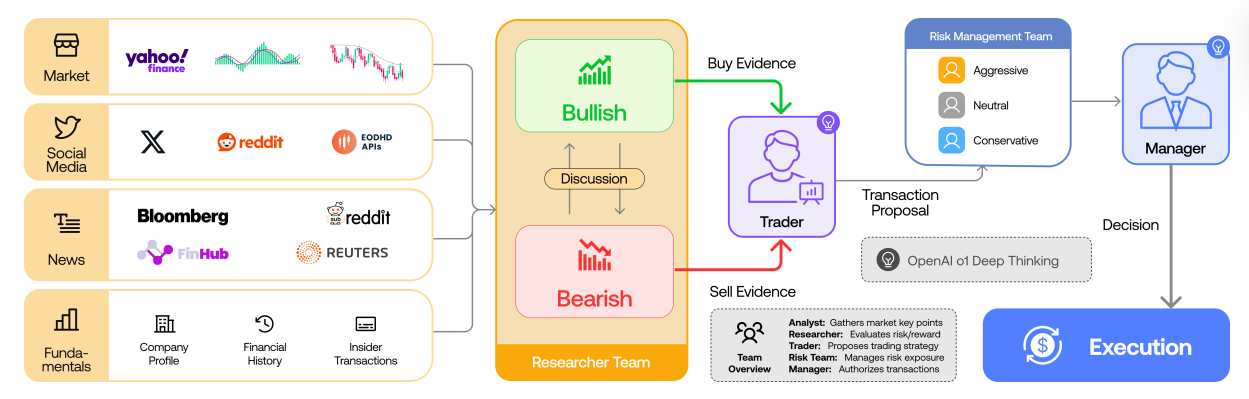

TradingAgents

Multi-agent LLM trading system for the NSE, implementing the TradingAgents paper (UCLA/MIT). Four parallel AI analysts debate and synthesize market data, fundamentals, news, and sentiment to produce trading decisions — adapted for NSE constraints like T+3 settlement, KES commissions, and frontier market illiquidity.

Based on arXiv:2412.20138
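
To make the T+3 settlement constraint concrete, here is a minimal sketch of how a settlement date could be derived from a trade date. It is an illustration only, not the code used in TradingAgents: the function name `settlement_date`, the `lag_days` parameter, and the `holidays` set are hypothetical, and a real system would load the exchange's actual holiday calendar.

```python
from __future__ import annotations

from datetime import date, timedelta


def settlement_date(
    trade_date: date,
    lag_days: int = 3,
    holidays: frozenset[date] = frozenset(),
) -> date:
    """Return the date `lag_days` business days after `trade_date`.

    Assumption: business days are Monday to Friday, minus any dates
    passed in `holidays` (a hypothetical exchange holiday calendar).
    """
    if lag_days < 0:
        raise ValueError("lag_days must be non-negative")

    current = trade_date
    remaining = lag_days
    while remaining > 0:
        current += timedelta(days=1)
        # Count only weekdays that are not exchange holidays.
        if current.weekday() < 5 and current not in holidays:
            remaining -= 1
    return current


if __name__ == "__main__":
    # A Thursday trade settles the following Tuesday under T+3.
    print(settlement_date(date(2025, 1, 9)))
```

An agent that sizes positions could use a helper like this to avoid planning to spend sale proceeds before they have settled.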

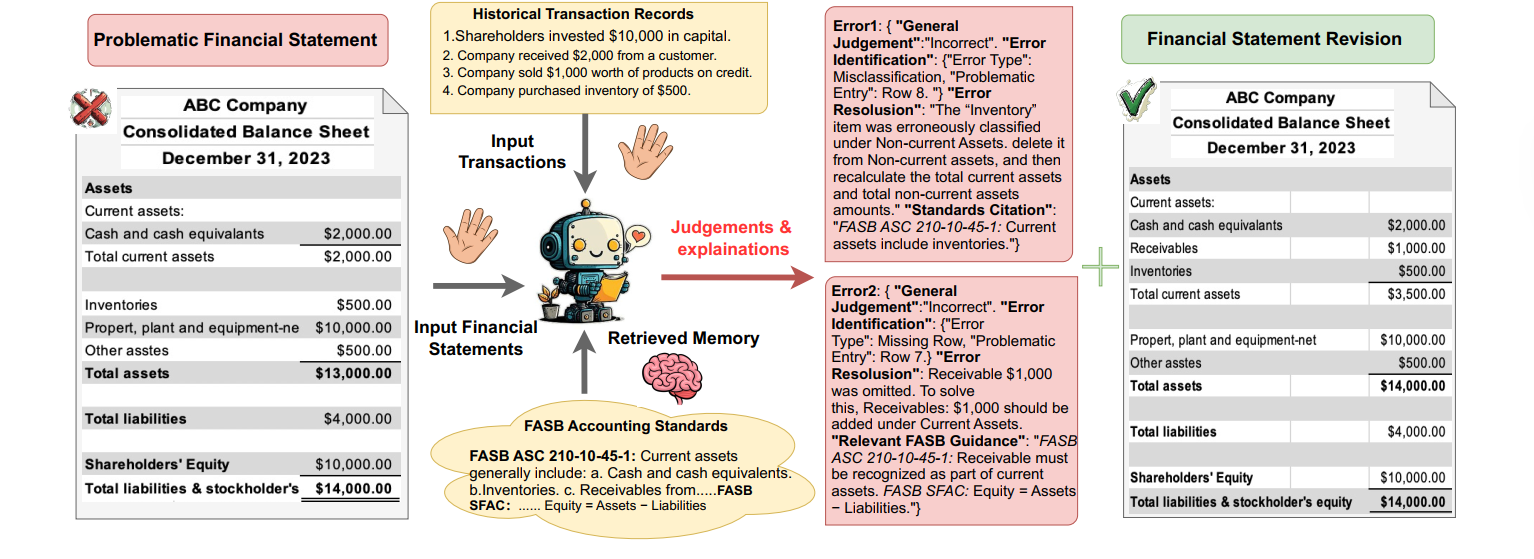

Automating Financial Statement Audits with LLMs

An LLM-powered auditing system that ingests Kenyan company financial statements (PDFs), chunks and embeds them with RAG, and produces structured audit reports — flagging IFRS violations, material misstatements, and going-concern risks. Built to handle the nuances of Kenyan regulatory filings (CMA, NSE listing rules, Companies Act 2015).
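
As a rough illustration of the chunking step that comes before embedding, the sketch below splits extracted statement text into overlapping word windows. The actual chunking strategy used by the auditor is not described here, so the function name `chunk_text` and the `chunk_size` and `overlap` defaults are assumptions chosen for the example.

```python
from __future__ import annotations


def chunk_text(text: str, chunk_size: int = 200, overlap: int = 50) -> list[str]:
    """Split text into word windows of `chunk_size` words.

    Consecutive chunks share `overlap` words so that a sentence cut at a
    boundary still appears whole in at least one chunk. The sizes here are
    illustrative assumptions, not tuned values.
    """
    if chunk_size <= 0 or not 0 <= overlap < chunk_size:
        raise ValueError("require chunk_size > 0 and 0 <= overlap < chunk_size")

    words = text.split()
    step = chunk_size - overlap
    chunks: list[str] = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + chunk_size]))
        # Stop once the current window has reached the end of the text.
        if start + chunk_size >= len(words):
            break
    return chunks


if __name__ == "__main__":
    sample = " ".join(f"w{i}" for i in range(10))
    for chunk in chunk_text(sample, chunk_size=4, overlap=1):
        print(chunk)
```

Each chunk would then be embedded and indexed for retrieval. Financial statements often benefit from splitting on headings or table boundaries instead of fixed windows, which this sketch does not attempt.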

My Publications

Selected research contributions in data science and technology for development.

Automatic Detection and Classification of Gastrointestinal Pathological Findings using a Hybrid ResNet50-CNN from Endoscopic Images

Kinyanjui, Samson, Juliet Moso and Patrick Gikunda.

2025 IST-Africa Conference (IST-Africa), 2025.

Topic Clustering of COVID-19 Medical Literature Using LDA and K-Means: A Case Study

Kinyanjui, Samson and Benson Kituku.

IEEE International Conference on ICT4DA, Bahir Dar, Ethiopia, 2025.

Financial Health Assessment for Households in Kenya

Kinyanjui, Samson, Felix Lopuran, Peter Kimanga, Edna Mugoh, Dennis Kiprotich, and Patrick Gikunda.

IEEE International Conference on ICT4DA, 2024.

Latest from the Blog

AI/ML paper breakdowns, implementations, and research notes.

Part 10: Scaling AI Systems

The series finale: concurrency patterns, caching at every layer, structured logging, monitoring, graceful degradation, and a shipping checklist.

Running a 22B Audio-Video Diffusion Model on a Single GPU

Fitting a 22B audio-video diffusion model into a GPU budget shared with an LLM stack — FP8 quantisation, tiled VAE decode, subprocess VRAM isolation.

Designing a Consumer-Tier AI Product That Degrades Gracefully

The default LLM product pattern propagates inference failures to the user. For consumer purchase flows, that's the wrong default. Here's the inversion.

Building a Hybrid-RAG Assistant That Doesn't Hallucinate Statute

A chat assistant grounded in ~1,600 chunks of primary legislation — hybrid retrieval, careful chunking, and the parser bug that taught me to validate chunks first.

Generating Long-Form Compliance Documents with a Single LLM Call Pipeline

Why big-prompt generation breaks at scale, and how I rebuilt my document generator as a pipeline of small typed LLM calls.

Part 9: Knowledge Distillation

Distil a fine-tuned 4B teacher into a 1.5B student via synthetic data — with quality-control filters and an honest eval against the teacher.

Part 8: Serving Quantized Models with vLLM

From AWQ checkpoint to a production endpoint. Multi-LoRA hot-swapping, prefix caching, and swapping the local endpoint into the Part 5 RAG.

Part 7: Quantization for Deployment

14 GB FP16 down to 4 GB AWQ with sub-1% accuracy loss. Full pipeline from merged checkpoint to AWQ + GGUF artefacts, with benchmarks.

Part 6: Fine-Tuning with LoRA and QLoRA

QLoRA + Unsloth on a free Colab T4. Dataset prep, hyperparameters, training, evaluation, common failure modes — end to end.

Part 5: Building a Production RAG System

Bolting search onto an LLM endpoint isn't RAG yet — it's the first 30%. Chunking, hybrid retrieval, prompt construction, citations, and eval.

Part 4: Embeddings and Vector Search

How embeddings work, which model to pick, and how to add semantic search to the FastAPI service from Part 3.

Part 3: Building APIs with FastAPI

Wrapping the Part 2 client in a production FastAPI service: validation, streaming, auth, rate limiting, and a Dockerfile.

Part 2: Your First LLM API Call

From four lines of OpenAI SDK to a production-grade client wrapper with retries, streaming, structured output, and cost tracking.

Part 1: Python Foundations for AI Engineers

The Python skills that actually matter when you start building AI systems — type hints, async, context managers, and tests.

How I Build AI Agents That Actually Work in Production

The patterns, tools, and pitfalls I've learned from building multi-agent systems that go beyond demos.

How I Get the Best Out of My GPU Using vLLM for Local LLM Production

How PagedAttention achieves 14-24x throughput over HuggingFace Transformers, with deployment code.

Demystifying LLM Quantization: GPTQ, AWQ & GGUF

How to shrink a 14GB model to 4GB and still get usable results — a practical guide with Python code.

Building TradingAgents for the NSE

From UCLA/MIT research paper to a working multi-agent LLM system that debates, analyzes, and trades NSE equities — adapted for frontier market constraints.

Automating Financial Statement Audits with LLMs

From research paper to production app — building an LLM-powered auditor for Kenyan company financial statements with RAG, FastAPI, and Next.js.

Understanding Attention Is All You Need

A deep dive into the Transformer architecture that revolutionized NLP and became the foundation for modern LLMs.

Contact Me

Let’s connect and build something great. I’d love to hear from you!